The Recursive Least Squares (RLS) algorithm is a standard tool in adaptive signal processing and system identification. It estimates the parameters of a system in real time, which makes it particularly valuable in applications where the system dynamics change over time. RLS extends the least squares method, which finds the best-fitting line or curve for a set of data points. Unlike batch least squares, which re-solves the problem over the whole data set, RLS updates its estimates recursively as each new sample arrives, making it better suited to online processing.

Understanding the Recursive Least Squares Algorithm

The RLS algorithm is designed to handle time-varying systems by continuously updating the parameter estimates as new data becomes available. This recursive nature allows the algorithm to adapt to changes in the system dynamics without the need to reprocess all the data from the beginning. The key steps involved in the RLS algorithm are:

- Initialization: Start with initial estimates of the parameters and the covariance matrix.

- Prediction: Use the current parameter estimates to predict the output of the system.

- Update: Calculate the prediction error and update the parameter estimates and the covariance matrix based on the new data.

- Repeat: Continue the process for each new data point.

The RLS algorithm can be mathematically represented as follows:

Given a system with input u(t) and output y(t), the relationship can be modeled as:

y(t) = φ(t)^T θ + e(t)

where φ(t) is the regression vector, θ is the parameter vector, and e(t) is the noise (or modeling error) term. The RLS algorithm updates the parameter estimates θ(t) and the covariance matrix P(t) as follows:

K(t) = P(t-1)φ(t) / (λ + φ(t)^T P(t-1)φ(t))

θ(t) = θ(t-1) + K(t) [y(t) - φ(t)^T θ(t-1)]

P(t) = (1/λ) [P(t-1) - K(t) φ(t)^T P(t-1)]

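where K(t) is the gain vector, the bracketed term y(t) - φ(t)^T θ(t-1) is the prediction error, and λ is the forgetting factor (0 < λ ≤ 1) that determines how much weight is given to past data. With λ = 1 all samples are weighted equally; smaller values discount older data more quickly.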
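To make the update equations concrete, the following minimal sketch works through a single step in the scalar case. The numbers (P(0) = 1, θ(0) = 0, λ = 0.95, φ(1) = 1, y(1) = 2) are chosen here purely for illustration, and the function name `rls_step_scalar` is hypothetical.

```python
def rls_step_scalar(theta, P, phi, y, lam):
    """One RLS update for a single parameter (all quantities are scalars)."""
    K = P * phi / (lam + phi * P * phi)   # gain K(t)
    e = y - phi * theta                   # prediction error
    theta_new = theta + K * e             # parameter update
    P_new = (P - K * phi * P) / lam       # covariance update
    return theta_new, P_new, K

theta1, P1, K1 = rls_step_scalar(theta=0.0, P=1.0, phi=1.0, y=2.0, lam=0.95)
print(f"K = {K1:.4f}, theta = {theta1:.4f}, P = {P1:.4f}")
# prints: K = 0.5128, theta = 1.0256, P = 0.5128
```

With these values the gain is about 0.51, so the estimate moves roughly halfway from its initial value of 0 toward the value 2 implied by the new measurement.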

Applications of the Recursive Least Squares Algorithm

The RLS algorithm finds applications in various fields due to its adaptive nature and real-time processing capabilities. Some of the key areas where the RLS algorithm is used include:

- System Identification: The RLS algorithm is used to identify the parameters of a system from input-output data. This is crucial in control engineering where understanding the system dynamics is essential for designing effective controllers.

- Adaptive Filtering: In signal processing, the RLS algorithm is used to design adaptive filters that can track changes in the signal characteristics. This is particularly useful in applications like noise cancellation and channel equalization.

- Communication Systems: The RLS algorithm is employed in communication systems for tasks such as channel estimation and equalization. It helps in mitigating the effects of multipath fading and interference, improving the overall performance of the communication system.

- Control Systems: In control engineering, the RLS algorithm is used for adaptive control, where the controller parameters are updated in real-time to adapt to changes in the system dynamics. This is essential in applications like robotics, aerospace, and automotive control.
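The adaptive behaviour that these applications rely on can be illustrated with a small simulation. The sketch below assumes a synthetic system whose single gain jumps from 2.0 to -1.0 halfway through the data; the signal, noise level, initial covariance, and the helper name `rls_step` are all assumptions made for this example.

```python
import numpy as np

def rls_step(theta, P, phi, y, lam):
    """One RLS update; theta and phi are 1-D arrays, P is a square matrix."""
    K = P @ phi / (lam + phi @ P @ phi)
    theta = theta + K * (y - phi @ theta)
    P = (P - np.outer(K, phi) @ P) / lam
    return theta, P

rng = np.random.default_rng(0)                        # fixed seed for reproducibility
n = 400
u = rng.standard_normal(n)                            # synthetic input signal
true_gain = np.where(np.arange(n) < 200, 2.0, -1.0)   # gain jumps at t = 200
y = true_gain * u + 0.01 * rng.standard_normal(n)     # noisy output

theta, P = np.zeros(1), 100.0 * np.eye(1)
estimates = []
for t in range(n):
    theta, P = rls_step(theta, P, u[t:t + 1], y[t], lam=0.95)
    estimates.append(theta[0])

print("estimate just before the jump:", round(estimates[199], 2))
print("estimate at the end:          ", round(estimates[-1], 2))
```

Because λ = 0.95 discounts old samples, the estimate should settle near the new gain after the jump rather than averaging the two regimes.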

Advantages and Disadvantages of the Recursive Least Squares Algorithm

The RLS algorithm offers several advantages that make it a popular choice for real-time applications. However, it also has some limitations that need to be considered. Here is a detailed look at the pros and cons of the RLS algorithm:

Advantages

- Real-Time Processing: The recursive nature of the algorithm allows it to update parameter estimates in real-time, making it suitable for online processing.

- Adaptability: The RLS algorithm can adapt to changes in the system dynamics, making it robust to time-varying systems.

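- Efficiency: Each update reuses the previous estimate and covariance matrix, so the cost per sample is fixed and does not grow with the amount of data already processed, unlike batch methods that reprocess the whole data set.

- Accuracy: The RLS algorithm provides accurate parameter estimates when the model structure matches the system, and it typically converges in fewer samples than gradient-based methods such as LMS.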
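The relationship between the recursive and batch approaches can be checked numerically. The following sketch assumes synthetic data with two hypothetical "true" parameters, no forgetting (λ = 1), and a large initial covariance; under those assumptions the recursive estimate should agree closely with the batch solution from `np.linalg.lstsq`.

```python
import numpy as np

rng = np.random.default_rng(1)
Phi = rng.standard_normal((50, 2))         # 50 regression vectors, 2 parameters
theta_true = np.array([0.5, -0.3])         # hypothetical "true" parameters
y = Phi @ theta_true + 0.05 * rng.standard_normal(50)

# Recursive estimate: one update per sample, no stored history.
theta, P = np.zeros(2), 1e6 * np.eye(2)    # large P(0) acts as a weak prior on theta(0)
for phi, y_t in zip(Phi, y):
    K = P @ phi / (1.0 + phi @ P @ phi)    # lambda = 1: no forgetting
    theta = theta + K * (y_t - phi @ theta)
    P = P - np.outer(K, phi) @ P

# Batch estimate: solves the full least-squares problem over all rows at once.
theta_batch, *_ = np.linalg.lstsq(Phi, y, rcond=None)

print("recursive:", theta)
print("batch:    ", theta_batch)
```

The recursive loop touches each sample once, whereas the batch call would have to be repeated over the whole data set every time a new sample arrived.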

Disadvantages

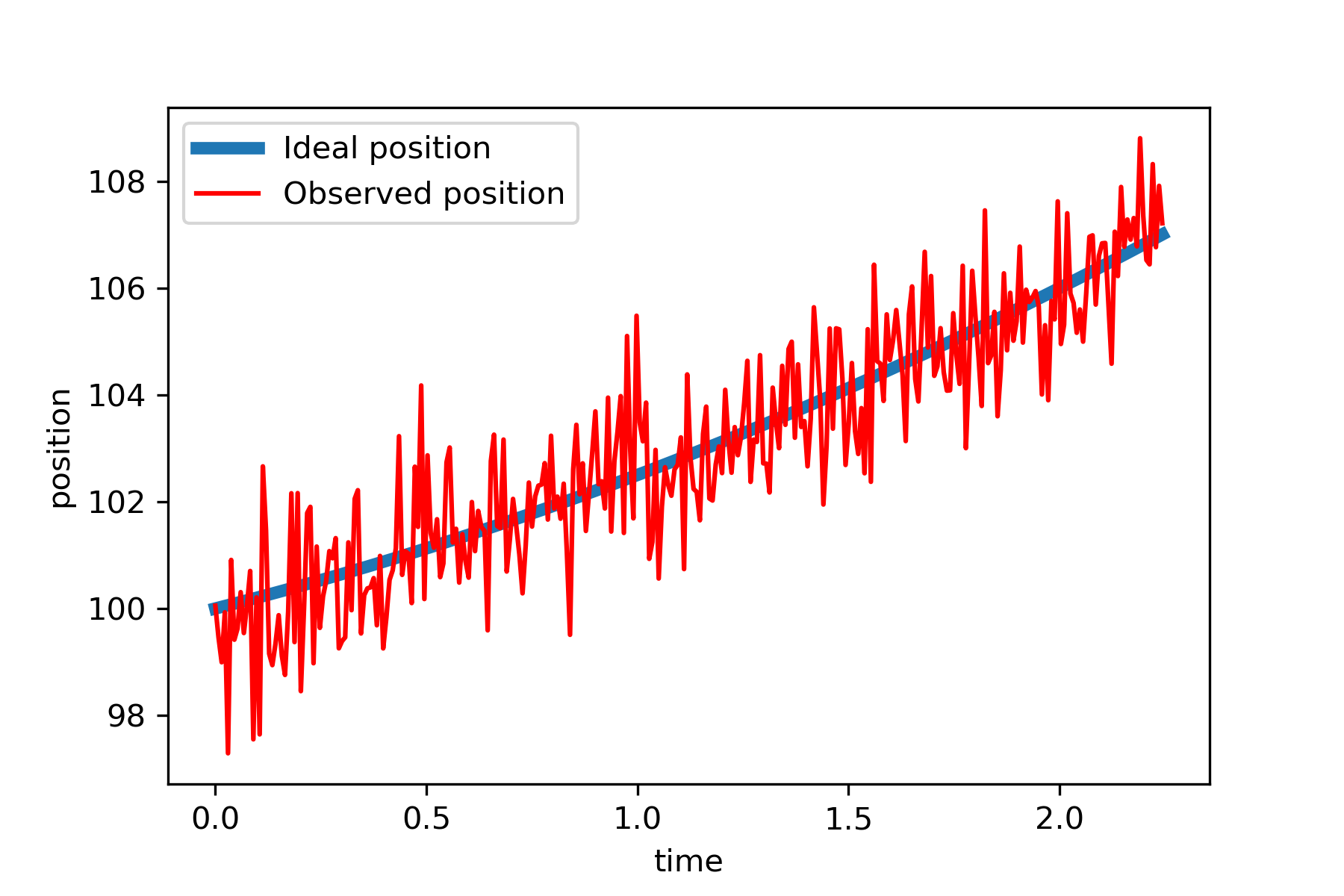

- Sensitivity to Noise: High levels of measurement noise can degrade the estimates, particularly when a small forgetting factor shortens the effective data window.

- Computational Complexity: Each update costs on the order of n² operations for n parameters, so the algorithm can become computationally intensive for high-dimensional systems.

- Choice of Forgetting Factor: The performance of the algorithm is highly dependent on the choice of the forgetting factor. Values close to 1 give smoother estimates but slower tracking, while smaller values track changes faster but amplify noise.

- Initialization Sensitivity: The initial estimates of the parameters and the covariance matrix can significantly affect the convergence and performance of the algorithm.
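One way to reason about the forgetting factor is through the weight it assigns to old data. With exponential forgetting, a sample that is k steps old is weighted by λ^k, and these weights sum to 1 / (1 - λ), which is often used as a rule-of-thumb "effective memory". The sketch below simply evaluates those two quantities; the helper name `effective_memory` and the chosen λ values are illustrative.

```python
def effective_memory(lam):
    """Rule-of-thumb number of samples that dominate the estimate: 1 / (1 - lam)."""
    return 1.0 / (1.0 - lam)

for lam in (0.90, 0.95, 0.99):
    weight_50 = lam ** 50   # relative weight of a sample that is 50 steps old
    print(f"lambda={lam:.2f}  memory ~ {effective_memory(lam):.0f} samples  "
          f"weight of a 50-step-old sample = {weight_50:.3f}")
```

Going from λ = 0.90 to λ = 0.99 stretches the effective memory from about 10 to about 100 samples, which is why small changes near 1 have a large effect on tracking speed.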

Implementation of the Recursive Least Squares Algorithm

Implementing the RLS algorithm involves several steps, including initialization, prediction, and updating the parameter estimates. Below is a step-by-step guide to implementing the RLS algorithm in Python:

First, let's import the necessary libraries:

    import numpy as np

Next, we define the RLS algorithm:

    def rls_algorithm(u, y, theta_init, P_init, lambda_):
        """Run RLS over the rows of u and return the estimate after each step."""
        n = len(u)
        theta = np.asarray(theta_init, dtype=float)
        P = np.asarray(P_init, dtype=float)
        theta_history = [theta]
        for t in range(n):
            phi = u[t]  # regression vector for this sample
            # Gain vector K(t)
            K = np.dot(P, phi) / (lambda_ + np.dot(np.dot(phi, P), phi))
            # Prediction error based on the previous estimate
            e = y[t] - np.dot(phi, theta)
            # Parameter update
            theta = theta + K * e
            # Covariance update; np.outer forms the matrix K(t) φ(t)^T
            P = (1 / lambda_) * (P - np.dot(np.outer(K, phi), P))
            theta_history.append(theta)
        return theta_history

Now, let's define the input and output data, initial parameter estimates, and the covariance matrix:

    # Example data: y = u + 1, so the one-parameter model y = θ·u
    # can only approximate it
    u = np.array([[1], [2], [3], [4], [5]])
    y = np.array([2, 3, 4, 5, 6])

    # Initial parameter estimates and covariance matrix
    theta_init = np.array([0.0])
    P_init = np.eye(1)
    lambda_ = 0.95

    # Run the RLS algorithm
    theta_history = rls_algorithm(u, y, theta_init, P_init, lambda_)

Finally, the estimates stored in theta_history can be plotted over time to visualize the convergence of the algorithm.

📝 Note: The above implementation is a basic example. In real-world applications, the input data u and output data y would be more complex, and additional considerations such as noise handling and parameter tuning would be necessary.

Comparison with Other Adaptive Algorithms

The RLS algorithm is just one of several adaptive algorithms used in signal processing and system identification. Other popular algorithms include the Least Mean Squares (LMS) algorithm and the Kalman filter. Here is a comparison of the RLS algorithm with these alternatives:

| Algorithm | Advantages | Disadvantages |
|---|---|---|
| Recursive Least Squares (RLS) Algorithm | Real-time processing, adaptability, efficiency, accuracy | Sensitivity to noise, computational complexity, choice of forgetting factor, initialization sensitivity |
| Least Mean Squares (LMS) Algorithm | Simplicity, low computational complexity, robustness to noise | Slower convergence, sensitivity to step size, less accurate parameter estimates |
| Kalman Filter | Optimal estimation, handles both process and measurement noise, adaptability | Complexity, sensitivity to model assumptions, computational intensity |

The choice of algorithm depends on the specific requirements of the application, including the need for real-time processing, the complexity of the system, and the presence of noise. The RLS algorithm is particularly suitable for applications where accurate parameter estimates and adaptability are crucial.
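The convergence difference between RLS and LMS listed in the table can be seen in a short experiment. This is a minimal sketch on synthetic data, assuming two hypothetical true parameters, an LMS step size of 0.01, and an RLS forgetting factor of 0.99; different settings would change the numbers.

```python
import numpy as np

rng = np.random.default_rng(2)
num_samples = 50
theta_true = np.array([0.5, -0.3])              # hypothetical true parameters
Phi = rng.standard_normal((num_samples, 2))
y = Phi @ theta_true + 0.01 * rng.standard_normal(num_samples)

# LMS: gradient step with a fixed step size mu (cost linear in the parameter count).
mu, theta_lms = 0.01, np.zeros(2)
# RLS: gain computed from the covariance matrix P (cost quadratic in the parameter count).
lam, theta_rls, P = 0.99, np.zeros(2), 100.0 * np.eye(2)

for phi, y_t in zip(Phi, y):
    theta_lms = theta_lms + mu * phi * (y_t - phi @ theta_lms)
    K = P @ phi / (lam + phi @ P @ phi)
    theta_rls = theta_rls + K * (y_t - phi @ theta_rls)
    P = (P - np.outer(K, phi) @ P) / lam

err_lms = np.linalg.norm(theta_lms - theta_true)
err_rls = np.linalg.norm(theta_rls - theta_true)
print(f"LMS error after {num_samples} samples: {err_lms:.4f}")
print(f"RLS error after {num_samples} samples: {err_rls:.4f}")
```

In this setup RLS should be much closer to the true parameters after 50 samples. A larger step size would speed up LMS, but at the cost of noisier estimates and a risk of instability, which is the step-size sensitivity noted in the table.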

In summary, the Recursive Least Squares algorithm is a powerful tool for real-time system identification and adaptive signal processing. Its ability to update parameter estimates recursively makes it efficient and adaptable to time-varying systems. Its limitations, such as sensitivity to noise, per-update computational cost, and dependence on the forgetting factor and initialization, need to be weighed against those strengths and against alternatives such as LMS and the Kalman filter when deciding whether it suits a given application.
