In the realm of data science and machine learning, the concept of the Inverse Data Matrix plays a crucial role in various analytical techniques. Understanding how to compute and utilize the Inverse Data Matrix can significantly enhance the accuracy and efficiency of data-driven models. This post delves into the intricacies of the Inverse Data Matrix, its applications, and the steps involved in computing it.

Understanding the Inverse Data Matrix

The Inverse Data Matrix is a fundamental concept in linear algebra and statistics. A data matrix is a matrix representation of a dataset: for an n × p data matrix X, the rows represent observations and the columns represent features or variables. Because such a matrix usually has more rows than columns, it is rarely square and so has no ordinary inverse. In practice, the matrix that gets inverted is a square matrix built from X, most often XᵀX (the Gram matrix) or the covariance matrix of the features. These inverses describe the relationships between features and appear in many statistical and machine learning algorithms.
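One concrete reading of "relationships between features": in the inverse of the feature covariance matrix (the precision matrix), the scaled off-diagonal entries are partial correlations. A zero entry means two features are uncorrelated once all the other features are accounted for. The following is a minimal sketch on synthetic data, assuming a chain in which x0 drives x1 and x1 drives x2. Both the data and the helper `partial_correlations` are hypothetical.

```python
import numpy as np

def partial_correlations(X: np.ndarray) -> np.ndarray:
    """Partial correlations between the columns of an n x p data matrix."""
    cov = np.cov(X, rowvar=False)         # p x p covariance of the features
    precision = np.linalg.inv(cov)        # inverse covariance ("precision") matrix
    d = np.sqrt(np.diag(precision))
    pcorr = -precision / np.outer(d, d)   # rho_ij = -P_ij / sqrt(P_ii * P_jj)
    np.fill_diagonal(pcorr, 1.0)          # convention: 1 on the diagonal
    return pcorr

# Synthetic chain x0 -> x1 -> x2 (hypothetical data)
rng = np.random.default_rng(0)
n = 2000
x0 = rng.normal(size=n)
x1 = x0 + rng.normal(size=n)
x2 = x1 + rng.normal(size=n)
X = np.column_stack([x0, x1, x2])

marginal = np.corrcoef(X, rowvar=False)
pc = partial_correlations(X)
print("marginal corr(x0, x2):", round(marginal[0, 2], 2))   # clearly positive
print("partial  corr(x0, x2):", round(pc[0, 2], 2))         # near zero given x1
```

Here x0 and x2 are clearly correlated on their own. The precision matrix, however, shows that the link between them runs entirely through x1.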

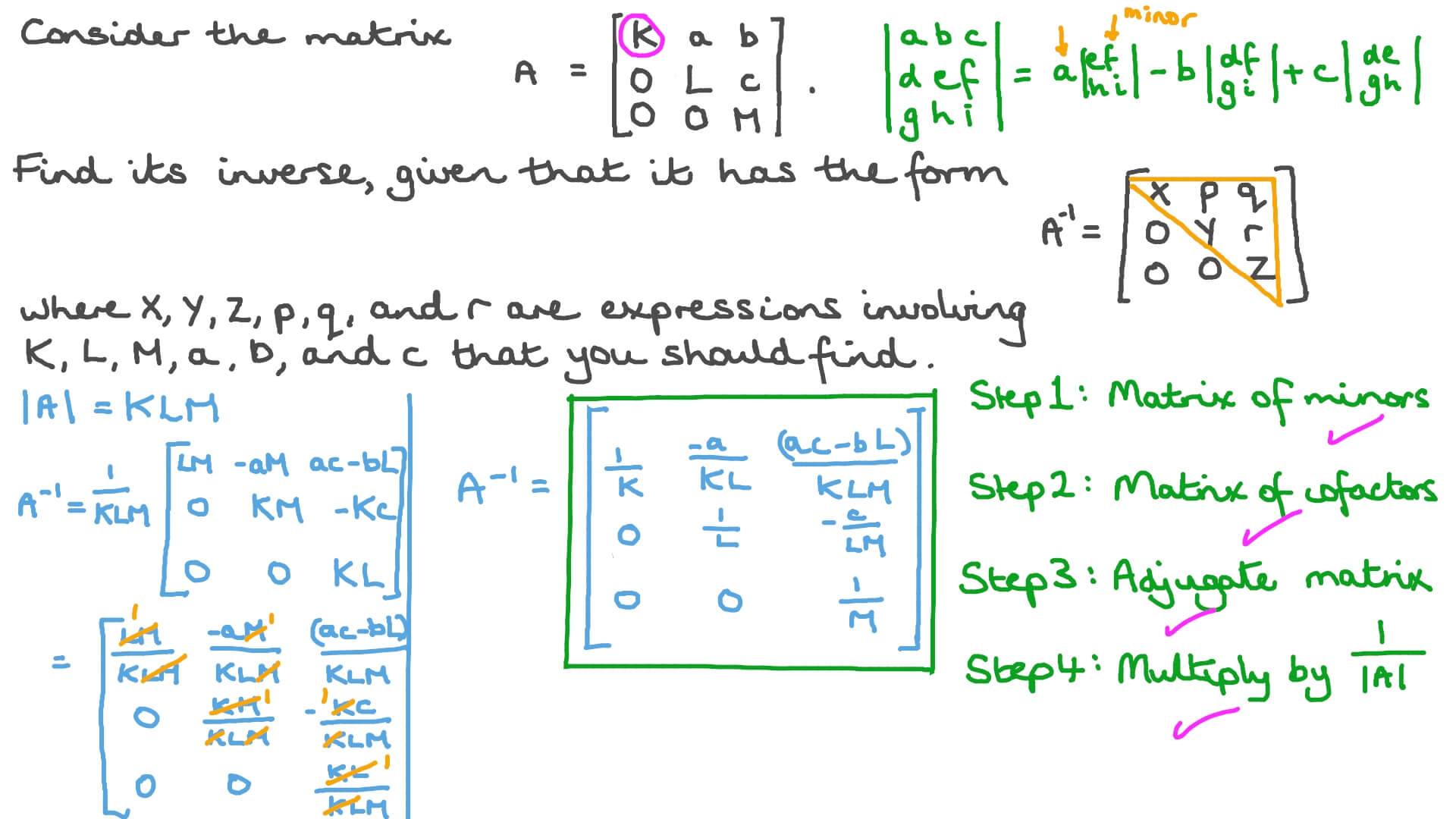

To understand the Inverse Data Matrix, it's essential to grasp the concept of matrix inversion. A matrix is invertible if and only if it is square (i.e., it has the same number of rows and columns) and its determinant is non-zero. The inverse of a matrix A, denoted A⁻¹, satisfies A A⁻¹ = A⁻¹ A = I, where I is the identity matrix.
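For the 2 × 2 case the definition can be checked by hand. If A = [[a, b], [c, d]] and ad − bc ≠ 0, then A⁻¹ = (1/(ad − bc)) [[d, −b], [−c, a]]. The small sketch below applies this formula and verifies that A A⁻¹ = I. The helper name `inverse_2x2` is hypothetical.

```python
import numpy as np

def inverse_2x2(A: np.ndarray) -> np.ndarray:
    """Invert a 2x2 matrix with the closed-form (adjugate) formula."""
    a, b = A[0]
    c, d = A[1]
    det = a * d - b * c
    if det == 0:
        raise ValueError("matrix is singular (determinant is zero)")
    return np.array([[d, -b], [-c, a]]) / det

A = np.array([[4.0, 7.0], [2.0, 6.0]])
A_inv = inverse_2x2(A)                      # determinant = 4*6 - 7*2 = 10
print(A_inv)
print(np.allclose(A @ A_inv, np.eye(2)))    # checks A A^-1 = I
```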

Applications of the Inverse Data Matrix

The Inverse Data Matrix has numerous applications in data science and machine learning. Some of the key areas where it is utilized include:

- Linear Regression: In linear regression, the coefficients are given by the normal equation, β = (XᵀX)⁻¹Xᵀy, where X is the data matrix and y is the vector of target values. The inverse here is taken of XᵀX rather than of X itself.

- Principal Component Analysis (PCA): PCA is a dimensionality reduction technique that transforms the data into a new coordinate system whose axes, the principal components, capture the most variance in the data. It relies on the eigendecomposition of the covariance matrix, or equivalently on the singular value decomposition of the centered data matrix, rather than on a matrix inverse.

- Ridge Regression: Ridge regression is a technique used to handle multicollinearity in the data. It adds a penalty λ‖β‖² on the size of the coefficients to the least-squares loss, which changes the solution to β = (XᵀX + λI)⁻¹Xᵀy. For any λ > 0, the matrix being inverted is guaranteed to be invertible.

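- Bayesian Inference: Consider Bayesian linear regression with a Gaussian prior on the coefficients and Gaussian noise. Updating the prior with the observed data gives a posterior whose covariance is (Σ₀⁻¹ + XᵀX/σ²)⁻¹, where Σ₀ is the prior covariance and σ² is the noise variance. This inverse quantifies the remaining uncertainty about the model's parameters.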
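The normal equation β = (XᵀX)⁻¹Xᵀy and its ridge counterpart β = (XᵀX + λI)⁻¹Xᵀy can be sketched in NumPy. This sketch assumes a synthetic dataset with made-up true coefficients and an arbitrary λ = 1.0. Following common practice, it calls `np.linalg.solve` on the normal equations instead of forming the inverse explicitly; mathematically, the result is the same.

```python
import numpy as np

rng = np.random.default_rng(42)

# Synthetic data (hypothetical): 200 observations, intercept + 2 features
n = 200
X = np.column_stack([np.ones(n), rng.normal(size=(n, 2))])
true_beta = np.array([1.0, 2.0, -3.0])
y = X @ true_beta + 0.1 * rng.normal(size=n)

gram = X.T @ X        # the p x p matrix that the normal equation inverts
xty = X.T @ y

# Ordinary least squares: beta = (X^T X)^{-1} X^T y, computed via a linear solve
beta_ols = np.linalg.solve(gram, xty)

# Ridge regression: beta = (X^T X + lam * I)^{-1} X^T y
# (for simplicity, this sketch also penalizes the intercept)
lam = 1.0
beta_ridge = np.linalg.solve(gram + lam * np.eye(gram.shape[0]), xty)

print("OLS:  ", np.round(beta_ols, 3))
print("Ridge:", np.round(beta_ridge, 3))
```

Ridge shrinks the coefficients toward zero. A larger λ shrinks them more, but it also makes the matrix being inverted better conditioned.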

Computing the Inverse Data Matrix

Computing the Inverse Data Matrix involves several steps. Here is a detailed guide on how to compute it:

Step 1: Prepare the Data Matrix

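The first step is to prepare the data matrix by organizing the data so that each row represents an observation and each column represents a feature. The resulting n × p matrix X is usually not square, so the matrix to be inverted is typically the p × p matrix XᵀX. Make sure that this square matrix is invertible, which holds exactly when the columns of X are linearly independent.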
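Here is a minimal sketch of this step, using a few hypothetical records with made-up field names. The records become an n × p data matrix X. X is generally not square, so the square p × p matrix XᵀX is what the later steps check and invert.

```python
import numpy as np

# Hypothetical observations: each dict is one row, each key one feature
records = [
    {"age": 34, "visits": 5, "spend": 120.0},
    {"age": 27, "visits": 2, "spend": 45.5},
    {"age": 45, "visits": 8, "spend": 300.0},
    {"age": 52, "visits": 1, "spend": 20.0},
]
features = ["age", "visits", "spend"]

# Rows = observations, columns = features
X = np.array([[r[f] for f in features] for r in records], dtype=float)
print(X.shape)        # (4, 3): not square, so X itself has no inverse

gram = X.T @ X
print(gram.shape)     # (3, 3): square, invertible when X has full column rank
```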

Step 2: Check for Invertibility

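Before computing the inverse, it's crucial to check that the matrix is invertible. In exact arithmetic, a square matrix is invertible exactly when its determinant is non-zero. In floating-point arithmetic, however, the determinant is rarely exactly zero, and its magnitude depends on the scale of the entries. The rank of the matrix and its condition number are more reliable indicators.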
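The determinant alone can mislead: a perfectly well-behaved matrix can have a tiny determinant. The sketch below compares it with NumPy's `np.linalg.matrix_rank` and `np.linalg.cond`. The helper `check_invertible` and its `1e12` condition-number threshold are illustrative assumptions, not a universal rule.

```python
import numpy as np

def check_invertible(A: np.ndarray, max_cond: float = 1e12) -> bool:
    """Return True if A is square, full rank, and not badly ill-conditioned."""
    if A.ndim != 2 or A.shape[0] != A.shape[1]:
        return False
    full_rank = np.linalg.matrix_rank(A) == A.shape[0]
    return bool(full_rank and np.linalg.cond(A) < max_cond)

# 0.1 * I (30 x 30): the determinant is tiny, yet the matrix is trivially invertible
scaled_identity = 0.1 * np.eye(30)
print(np.linalg.det(scaled_identity))    # roughly 1e-30
print(np.linalg.cond(scaled_identity))   # about 1: all singular values are equal
print(check_invertible(scaled_identity))

# The rows are multiples of each other, so this matrix is singular
singular = np.array([[1.0, 2.0], [2.0, 4.0]])
print(check_invertible(singular))
```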

Step 3: Compute the Inverse

Once the data matrix is prepared and checked for invertibility, the next step is to compute the inverse. This can be done using various methods, including:

- Gaussian Elimination (Gauss-Jordan): Augment the matrix with the identity, [A | I], and apply row operations until the left half becomes I. The right half is then A⁻¹.

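- LU Decomposition: This method factors the matrix (with row pivoting as needed) into a lower triangular matrix L and an upper triangular matrix U. Each column of the inverse is then obtained by solving LUx = eᵢ with forward and back substitution, where eᵢ is the i-th column of the identity.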

- Singular Value Decomposition (SVD): SVD factors the matrix as A = UΣVᵀ, where U and V are orthogonal and Σ is diagonal with the singular values on its diagonal. If all singular values are non-zero, then A⁻¹ = VΣ⁻¹Uᵀ.
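The Gauss-Jordan and SVD methods can be sketched directly. For Gauss-Jordan, augment A with the identity, row-reduce the left half to I with partial pivoting, and read A⁻¹ off the right half. For SVD, compute VΣ⁻¹Uᵀ. This is an illustrative implementation; the function name `gauss_jordan_inverse` and its absolute `tol` are assumptions. In practice, `np.linalg.inv`, which relies on LAPACK's LU-based solver, is faster and more robust.

```python
import numpy as np

def gauss_jordan_inverse(A, tol: float = 1e-12) -> np.ndarray:
    """Invert a square matrix by Gauss-Jordan elimination with partial pivoting."""
    A = np.asarray(A, dtype=float)
    n = A.shape[0]
    if A.shape != (n, n):
        raise ValueError("matrix must be square")
    aug = np.hstack([A, np.eye(n)])          # augmented matrix [A | I]

    for col in range(n):
        # Partial pivoting: move the row with the largest entry in this column up
        pivot = col + np.argmax(np.abs(aug[col:, col]))
        if abs(aug[pivot, col]) < tol:
            raise np.linalg.LinAlgError("matrix is singular to working precision")
        aug[[col, pivot]] = aug[[pivot, col]]

        aug[col] /= aug[col, col]            # scale the pivot row so the pivot is 1
        for row in range(n):                 # clear this column in every other row
            if row != col:
                aug[row] -= aug[row, col] * aug[col]

    return aug[:, n:]                        # the right half now holds A^{-1}

A = np.array([[2.0, 1.0, 1.0], [1.0, 3.0, 2.0], [1.0, 0.0, 0.0]])
A_inv = gauss_jordan_inverse(A)
print(np.allclose(A_inv, np.linalg.inv(A)))

# SVD route: A = U diag(s) V^T, so A^{-1} = V diag(1/s) U^T
U, s, Vt = np.linalg.svd(A)
A_inv_svd = Vt.T @ np.diag(1.0 / s) @ U.T
print(np.allclose(A_inv_svd, A_inv))
```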

Here is an example of how to compute the Inverse Data Matrix using Python and the NumPy library:

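```python
import numpy as np

# Define a square data matrix (determinant = 4*6 - 7*2 = 10)
data_matrix = np.array([[4, 7], [2, 6]], dtype=float)

# Check invertibility: a square matrix is invertible when it has full rank.
# (Testing the floating-point determinant for exact zero is unreliable.)
if np.linalg.matrix_rank(data_matrix) == data_matrix.shape[0]:
    # Compute the inverse
    inverse_matrix = np.linalg.inv(data_matrix)
    print("Inverse Data Matrix:")
    print(inverse_matrix)
else:
    print("The matrix is not invertible.")
```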

💡 Note: Ensure that the matrix is square and invertible before computing the inverse. Singular matrices cannot be inverted, and nearly singular ones can produce inverses dominated by rounding error.
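In NumPy, `np.linalg.inv` raises `numpy.linalg.LinAlgError` when it detects an exactly singular matrix. Instead of running a separate check, you can attempt the inversion and handle the exception, as in the sketch below. The helper name `safe_inverse` is hypothetical. A nearly singular matrix may not trigger the error at all, so this approach complements a condition-number check rather than replacing it.

```python
import numpy as np

def safe_inverse(A):
    """Return the inverse of A, or None if NumPy reports it as singular."""
    try:
        return np.linalg.inv(A)
    except np.linalg.LinAlgError:
        return None

print(safe_inverse(np.array([[4.0, 7.0], [2.0, 6.0]])))  # the inverse
print(safe_inverse(np.array([[1.0, 2.0], [2.0, 4.0]])))  # None: rows are proportional
```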

Challenges and Considerations

While the Inverse Data Matrix is a powerful tool, there are several challenges and considerations to keep in mind:

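- Numerical Stability: Computing the inverse of a matrix can be numerically unstable, especially for large or ill-conditioned matrices (those with a large condition number), where small rounding errors in the input are greatly amplified in the result. Regularization can help mitigate this issue, as can avoiding the explicit inverse altogether and solving the linear system directly.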

- Computational Complexity: Inverting an n × n matrix with standard algorithms takes O(n³) operations, which becomes expensive for large matrices. When the goal is to solve Ax = b, solving the system directly (for example with an LU factorization) is cheaper and more accurate than forming A⁻¹ first.

- Multicollinearity: In linear regression, highly correlated features make XᵀX singular or nearly singular. It is exactly singular when one feature is a linear combination of the others. Techniques like ridge regression or principal component analysis can be used to address this issue.
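The effect of multicollinearity on conditioning can be seen numerically. In the sketch below, which uses synthetic data and an arbitrary illustrative λ = 1.0, the second feature is almost a copy of the first. As a result, XᵀX is nearly singular. Adding λI, as ridge regression does, brings the condition number down sharply. The ridge system is then solved with `np.linalg.solve` instead of forming an explicit inverse.

```python
import numpy as np

rng = np.random.default_rng(1)

# Synthetic data: x2 is x1 plus tiny noise, i.e. strong multicollinearity
n = 100
x1 = rng.normal(size=n)
x2 = x1 + 1e-4 * rng.normal(size=n)
X = np.column_stack([x1, x2])
y = 3.0 * x1 + rng.normal(size=n)

gram = X.T @ X
lam = 1.0
gram_ridge = gram + lam * np.eye(2)

print(f"cond(X^T X)         = {np.linalg.cond(gram):.2e}")        # very large
print(f"cond(X^T X + lam*I) = {np.linalg.cond(gram_ridge):.2e}")  # far smaller

# Solve the ridge system directly instead of forming the inverse
beta_ridge = np.linalg.solve(gram_ridge, X.T @ y)
print("ridge coefficients:", np.round(beta_ridge, 3))
```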

Advanced Techniques

Beyond the basic computation of the Inverse Data Matrix, there are advanced techniques that can enhance its utility in data science and machine learning. Some of these techniques include:

- Pseudo-Inverse: For non-square or rank-deficient matrices, the Moore-Penrose pseudo-inverse can be used instead. It yields the minimum-norm least-squares solution to a linear system, even when the matrix is not invertible.

- Regularization: Ridge regression adds λI to XᵀX, which improves its conditioning and makes it invertible for any λ > 0. Lasso also adds a penalty, on the absolute values of the coefficients, but it has no closed-form, inverse-based solution and is instead fitted with iterative methods such as coordinate descent.

- Iterative Methods: Iterative methods like the conjugate gradient method can be used to solve linear systems without explicitly computing the inverse. The conjugate gradient method applies to symmetric positive-definite matrices. These methods are particularly useful for large and sparse matrices.
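The iterative approach can be illustrated with a textbook conjugate gradient solver for a symmetric positive-definite system Ax = b. It needs only matrix-vector products and never forms A⁻¹. This is a minimal sketch; the function name and tolerances are assumptions. For real workloads, SciPy provides `scipy.sparse.linalg.cg`.

```python
import numpy as np

def conjugate_gradient(A, b, tol=1e-10, max_iter=None):
    """Solve A x = b for symmetric positive-definite A without inverting A."""
    n = b.shape[0]
    max_iter = max_iter or 10 * n
    x = np.zeros(n)
    r = b - A @ x          # residual
    p = r.copy()           # search direction
    rs_old = r @ r
    for _ in range(max_iter):
        Ap = A @ p
        alpha = rs_old / (p @ Ap)       # step length along p
        x += alpha * p
        r -= alpha * Ap
        rs_new = r @ r
        if np.sqrt(rs_new) < tol:
            break
        p = r + (rs_new / rs_old) * p   # next direction, A-conjugate to earlier ones
        rs_old = rs_new
    return x

# Random symmetric positive-definite system (synthetic example)
rng = np.random.default_rng(0)
M = rng.normal(size=(50, 50))
A = M.T @ M + 50 * np.eye(50)   # SPD by construction
b = rng.normal(size=50)

x = conjugate_gradient(A, b)
print(np.allclose(x, np.linalg.solve(A, b)))
```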

Here is an example of how to compute the pseudo-inverse using Python and the NumPy library:

```python
import numpy as np

# Define the data matrix
data_matrix = np.array([[1, 2], [3, 4], [5, 6]])

# Compute the pseudo-inverse
pseudo_inverse = np.linalg.pinv(data_matrix)

print("Pseudo-Inverse Data Matrix:")
print(pseudo_inverse)
```

💡 Note: np.linalg.pinv computes the pseudo-inverse from the SVD, setting singular values below a small relative cutoff to zero. This cutoff is what lets it handle non-square and rank-deficient matrices.
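Two properties of the pseudo-inverse can be checked with the same 3 × 2 matrix. Because its columns are linearly independent, pinv(X) equals (XᵀX)⁻¹Xᵀ. In addition, pinv(X) @ y gives the same least-squares solution as `np.linalg.lstsq`. The target vector `y` below is made up for illustration.

```python
import numpy as np

X = np.array([[1, 2], [3, 4], [5, 6]], dtype=float)
y = np.array([1.0, 2.0, 2.5])   # hypothetical targets

pinv_X = np.linalg.pinv(X)

# Full column rank: the pseudo-inverse matches the normal-equation form
print(np.allclose(pinv_X, np.linalg.inv(X.T @ X) @ X.T))

# Least-squares solution via the pseudo-inverse vs. a dedicated solver
beta_pinv = pinv_X @ y
beta_lstsq, *_ = np.linalg.lstsq(X, y, rcond=None)
print(np.allclose(beta_pinv, beta_lstsq))
```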

Real-World Applications

The Inverse Data Matrix has numerous real-world applications across various industries. Some of the key areas where it is utilized include:

- Finance: In financial modeling, the inverse of the covariance matrix of asset returns is used to compute portfolio weights, for example for minimum-variance portfolios, and to optimize risk-return trade-offs in mean-variance optimization. Principal component analysis of financial data relies on the same covariance matrix, through its eigendecomposition.

- Healthcare: In healthcare, regression models built on patient data are used to predict disease outcomes and optimize treatment plans. The standard errors of their coefficients come from the diagonal of σ²(XᵀX)⁻¹, which helps identify the factors most strongly associated with patient health.

- Marketing: In marketing, regression models fitted to customer data are used to predict customer behavior and optimize marketing strategies. Relating sales or conversions to spending on each channel helps compare the effectiveness of marketing channels and tactics.

- Engineering: In engineering, the inverse appears in least-squares fitting of sensor data and in structural analysis. There, displacements u satisfy Ku = f for a stiffness matrix K, so u = K⁻¹f, although in practice the system is solved directly rather than by forming K⁻¹.
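As one concrete finance case, consider the global minimum-variance portfolio (fully invested, with short positions allowed). Its weights are w = Σ⁻¹1 / (1ᵀΣ⁻¹1), where Σ is the covariance matrix of asset returns and 1 is a vector of ones. The sketch below uses a hypothetical 3-asset covariance matrix; the numbers are made up and are not market data.

```python
import numpy as np

# Hypothetical annualized covariance matrix of returns for three assets
cov = np.array([
    [0.040, 0.006, 0.010],
    [0.006, 0.090, 0.012],
    [0.010, 0.012, 0.160],
])
ones = np.ones(cov.shape[0])

# Sigma^{-1} 1, computed with a linear solve rather than an explicit inverse
z = np.linalg.solve(cov, ones)
weights = z / (ones @ z)        # normalize so the weights sum to 1

port_var = weights @ cov @ weights
print("weights:", np.round(weights, 3))
print("portfolio volatility:", round(float(np.sqrt(port_var)), 4))
```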

Here is an example of how the Inverse Data Matrix can be used in a real-world scenario:

Consider a dataset containing customer purchase data for an e-commerce company. The data matrix can be used to analyze customer behavior, predict future purchases, and optimize marketing strategies. By fitting a regression model, whose solution involves the inverse of XᵀX, the company can identify which factors are most strongly associated with customer purchases and focus its marketing efforts accordingly.

For example, the data matrix might contain features such as customer demographics, purchase history, and browsing behavior. The inverse of XᵀX can then be used to compute the coefficients of a linear regression model, which can in turn be used to predict future purchases and optimize marketing strategies.

Here is a table illustrating the steps involved in using the Inverse Data Matrix for customer purchase analysis:

| Step | Description |
|---|---|
| 1 | Prepare the data matrix X containing customer purchase data. |
| 2 | Check that XᵀX is invertible (X has full column rank). |
| 3 | Compute the inverse (XᵀX)⁻¹. |
| 4 | Use it to compute the coefficients of a linear regression model, β = (XᵀX)⁻¹Xᵀy. |
| 5 | Predict future purchases and optimize marketing strategies based on the model. |
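The five steps above can be strung together in a short sketch. Everything in it is hypothetical: the feature names, the synthetic customer data, and the coefficients used to generate that data. To mirror the steps, step 3 forms (XᵀX)⁻¹ explicitly. `np.linalg.solve` or `np.linalg.lstsq` would be the more robust choice in production.

```python
import numpy as np

rng = np.random.default_rng(7)

# Step 1: synthetic customer data (hypothetical features and purchase amounts)
n = 300
age = rng.uniform(18, 70, size=n)
past_orders = rng.poisson(4, size=n).astype(float)
minutes_browsing = rng.uniform(0, 60, size=n)
X = np.column_stack([np.ones(n), age, past_orders, minutes_browsing])
y = 20 + 0.5 * age + 8 * past_orders + 1.5 * minutes_browsing + rng.normal(0, 5, size=n)

# Step 2: X^T X is invertible exactly when X has full column rank
gram = X.T @ X
assert np.linalg.matrix_rank(X) == X.shape[1], "X^T X is singular"

# Step 3: invert X^T X
gram_inv = np.linalg.inv(gram)

# Step 4: regression coefficients via the normal equation
beta = gram_inv @ X.T @ y
print("coefficients:", np.round(beta, 2))

# Step 5: predict the purchase amount for a new (hypothetical) customer
new_customer = np.array([1.0, 35.0, 6.0, 20.0])   # intercept, age, orders, minutes
print("predicted purchase amount:", round(float(new_customer @ beta), 2))
```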

💡 Note: The Inverse Data Matrix can be used in various real-world scenarios to analyze data, make predictions, and optimize strategies. It is a powerful tool in the arsenal of data scientists and machine learning practitioners.

In conclusion, the Inverse Data Matrix is a fundamental concept in data science and machine learning. It plays a crucial role in various analytical techniques and has numerous applications across different industries. Understanding how to compute and utilize the Inverse Data Matrix can significantly enhance the accuracy and efficiency of data-driven models. By following the steps outlined in this post and considering the challenges and advanced techniques, data scientists and machine learning practitioners can leverage the power of the Inverse Data Matrix to gain valuable insights from their data.
